“The idea that Bill Gates has appeared like a knight in shining armour to lead all customers out of a mire of technological chaos neatly ignores the fact that it was he, by peddling second rate technology, who led them into it in the first place, and continues to do so today.”

– Douglas Adams, ‘Biting back at Microsoft’, June 2001

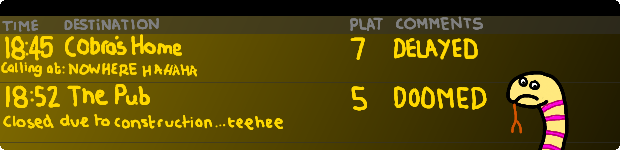

Plankton has stolen the plans for an innovative new user interface paradigm, but he need not hurry; Gary the sea-snail has represented the wheels of progress since around 1976

Um. Yes. In your dreams.

Today’s computer interfaces are a massive compromise. They were born at a time when processing power was measured in snails, and it was necessary to curtail the user experience to get anything useful out of the machines at all. Interfaces and software had to be computer friendly, not human friendly. As processing power rose, it became possible to shift the balance a tad in favour of the user with windows, icons, menus and the most incredible paradigm shift of all: the mouse and mouse pointer. Almost overnight, the enormous power of the computer fell into the grasp of the average person.

The mouse and window stuff hit the consumer back in the mid-80s. Since then, the average home computer’s power has grown by, wait for it, five orders of magnitude. Yes, that’s right, number fans: more than 100,000 times. My current work machine, a rather sexy MacBook Pro, has the processing power of about 100,000 original Macintoshes. That amount of Macintoshage wouldn’t fit in my house, even stacked to the ceiling, even if I used the garage and the loft and broke through into the neighbour’s house too.

With all this power, perhaps you’re Roger Mooring your eyebrows right now as you realise that modern UIs are effectively just an iterative rehash of the 80s experience: before the Internet (to the masses, anyway), before the mobile phone and long before The Simpsons, Ben and Holly’s Little Kingdom and SpongeBob SquarePants. Microsoft have given us the ribbon (an ‘innovation’ barely better than that infernal paper clip, designed to disguise their own inability to manage layers of complexity), which, along with all the other window decorations present these days, means you barely get to ‘Dear Sir’ on an average laptop before needing to scroll the screen. Apple have added easy to easy, and users have discovered that the result is, at best, hard but usually just annoying.

Take an example that annoyed me yesterday: “Your Mac can’t eject the Volume ‘Time Machine’ because someone is using it.” But who? I don’t know. But the computer knows. And it should tell me. And it should sort it out. In fact, scrub that: it should just sort it out without telling me. Crap like this should be on a need-to-know basis and, frankly, I don’t and shouldn’t need to know. This is not me being greedy; this is me expecting a basic level of service from the system I am using. But then again, I travel on First Capital Connect’s trains, so perhaps I should lower my expectations accordingly.

Many people hoped that, when Microsoft’s global operating system monopoly started to crumble, first in the mobile space and then on the desktop, innovation would finally make some real progress through the cracks and opportunities that appeared… but it would appear that everyone is still decorating the same tree. Let’s face it, ‘touch’ is mostly just the same old stuff with a finger replacing the mouse pointer and, at best, it is rolling the un-polishable in glitter, as they say.

The further we go, the more we stay put

The Internet used to be a clumsy, hard-to-interact-with academic network, navigated with systems like gopher, telnet, finger (yes, “finger”. Look it up. But carefully. Just imagine the kind of party where that was dreamt up.) and FTP, and the data on it was unbelievably hard to find. The World Wide Web transformed that overnight. Believe me: when the next innovation arrives that finally translates the computer’s view of the world into human terms, it’ll change the way we interact with the Internet so fast that if you blink, you’ll miss it. And that will be Web ‘2.0’, not what’s being shovelled down your throat under that moniker at the moment.

Modern UIs are still more computer friendly than user friendly, even though the restrictions that forced that are long gone. We simply don’t work the way computers do. If real life were organised the way your computer is, you’d be exposed to the entire complexity of life everywhere you went. Your brain would explode. Computers have had the power to work the way we do, to adapt themselves to us and our ways of life, for quite some time. Whilst we enjoy the trappings and pleasures of a free-market capitalist economy, one of the more ironic prices we pay is companies’ risk-averse habit of playing the same game as everyone else, only a little better, rather than moving the goalposts far, far away and changing the fundamental nature of winning and losing.

An old joke: “E-mail me two of those, please. I need a couple of copies.” It’s side-splitting IT humour with an uncomfortable underlying truth: our minds work spatially. We’re used to things having a place. Here. There. Somewhere. It’s how we interact with the real world around us; it’s something we learn from birth. It’s something we’ve been doing since we were little fishies taking our first steps onto dry land from the chilly primeval oceans. The fact that computers lay a complex network of tripwires across this knowledge is something history will not remember kindly. Take even the most trivial of examples: I want two copies of a file. Two identical copies. I want to put them in the same folder and have them share the same name, but I cannot. Why? Because on virtually every computer, files are indexed by name. That is because the underlying database of files, the file-system, is architecturally much the same as the very first ones back in the 60s and 70s. Yes, you heard me: the reason you can’t do the most basic of human things on a modern computer comes from decisions made before man had landed on the moon and before I was born. And I’m old. Em oh oh en, that spells old.¹
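To make the two-identical-copies complaint concrete, here is a minimal Python sketch. It is our own illustration, not code from any particular operating system, and it assumes an ordinary file-system where a folder maps each name to exactly one file. Mode `"x"` asks `open()` to create a file and fail if the name is already taken. The second half is a purely hypothetical toy store (not a real file-system API) that keys each object by a random ID and treats the name as a mere label, which is the kind of arrangement that would let two identical `report.txt` files sit side by side.

```python
import os
import tempfile
import uuid

# Part 1: a real folder. Each name maps to one file, so a second
# "report.txt" cannot be created next to the first.
with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, "report.txt")

    # Create the first copy; mode "x" means "create, fail if it exists".
    with open(path, "x") as f:
        f.write("Dear Sir")

    # Try to create an identical second copy under the same name.
    try:
        with open(path, "x") as f:
            f.write("Dear Sir")
        second_copy_created = True
    except FileExistsError:
        second_copy_created = False

    files_in_folder = os.listdir(folder)

print(second_copy_created)  # False
print(files_in_folder)      # ['report.txt']

# Part 2: a hypothetical store keyed by a unique ID instead of by name.
# The name is just a label, so duplicates are allowed.
store = {}
for _ in range(2):
    store[uuid.uuid4()] = {"name": "report.txt", "body": "Dear Sir"}

same_name = [obj for obj in store.values() if obj["name"] == "report.txt"]
print(len(same_name))  # 2
```

The point of the contrast is only that "the same name in the same place" is an artefact of using the name as the index; it says nothing about how the people mentioned at the end of this post are going about it.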

Mummified metaphors

Clapped-out old metaphors such as “folders” are there to try to add some kind of human-level spatial organisation to something that was designed to be kind to low-powered computers with little or no memory. When your computer’s main memory doesn’t even have enough space to store this blog post, you must make compromises. For those restrictions still to be anchors around the legs of progress in the twenty-first century is regrettable, and a massive collective embarrassment for everyone involved. Things should have a place. A real place. And we don’t mean the tired old “draw a desktop” type of thing; we’re talking about making your computer a physical space, or allowing it to seamlessly occupy your physical space with you. Open the door and the whole world is available to you: un-real estate as far as the eye can see, which works the same way that you do, straight out of the box.

The most intelligent part of a computer is the person sitting in front of it. It is a sad, sad state of affairs that it remains the single most underused component.

But it won’t be this way forever.

Soon, the only way to harness the incredible computing power we have will be to change the way we design and implement software at a fundamental level. Or, to answer this chap’s tweet: no, low-resolution TV doesn’t look better than high-resolution gaming because we’re optimising the wrong things; it’s because we’re doing the wrong things, and doing them wrong. Needless to say, I’ve soapboxed about this before. Several times.

Say goodbye to keyboards, mice and conventional-looking computing devices because, soon, computers will be both places you go and companions that go there with you. Computing will be something you experience. Software will adapt itself to you rather than you needing to adapt yourself to it. Or, to put it another way, the entire concept of a “computer” will fade into your everyday life for all but those who create and develop for them.

This isn’t an “if”, it’s a “when”.

Oh, and, if you’ve not already guessed, I know people who are working on it.

–

¹ A packet of ready salted crisps to the first person to get this reference. Edit: actually, no, I tried googling it; it’s too easy, and my daughter ate the crisps anyway.

2 Responses to Square wheels are better than no wheels at all, right?